Instagram will alert parents when teens search for suicide, self-harm content

Instagram announced Thursday that the social media platform will launch notifications to alert parents when their teens search for suicide or self-harm content repeatedly in a short time period.

"Our goal is to empower parents to step in if their teen's searches suggest they may need support," Instagram said in its announcement. "We also want to avoid sending these notifications unnecessarily, which, if done too much, could make the notifications less useful overall."

The notifications are scheduled to begin in two weeks in the U.S., U.K., Australia, and Canada. They will be available to parents who are enrolled in Instagram's Parental Supervision feature and will apply to teenagers who use Instagram Teen Accounts.

Instagram said it plans to roll out alerts to additional regions later this year.

Instagram said it consulted with experts from its Suicide and Self-Harm Advisory Group and analyzed users' search behavior on the popular social media app to determine when to push notifications to parents. The company promised to "continue to monitor and listen to feedback" to improve the notification process.

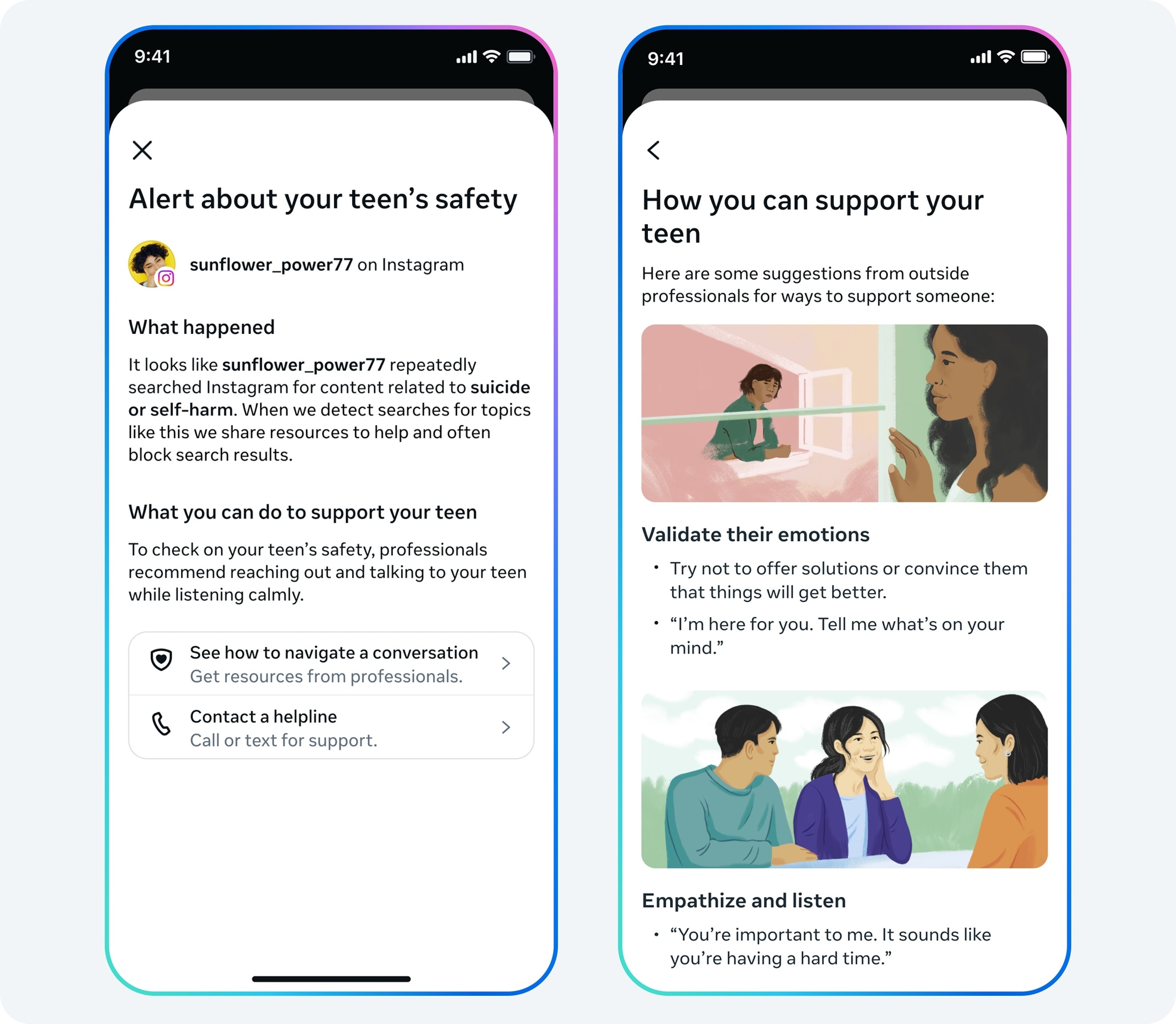

According to Instagram, the parental notifications will be sent in-app and via email, text, or WhatsApp, depending on what contact information a parent has shared, and will be triggered if "phrases promoting suicide or self-harm, phrases that suggest a teen wants to harm themselves, and terms like 'suicide' or 'self-harm'" are used in the app's search tool.

Parental notifications will also include expert resources meant to help parents have conversations with teens about the sensitive topic.

Instagram already blocks users from searching for self-harm and suicide content and directs users to local helplines and resources.

Instagram said the company is also working to introduce similar parental notifications for teens' conversations with its AI service and aims to launch those notifications later this year.

The announcement comes as Instagram's parent company, Meta, is embroiled in a landmark social media trial in California over claims that social media platforms like Facebook and Instagram, and their features, are addictive.

Last week, Meta CEO Mark Zuckerberg testified in the case and answered questions about age restrictions, app engagement and filters on Meta's social media platforms. Meta also owns the social media networks Facebook and Threads and the messaging apps Messenger and WhatsApp.

Instagram head Adam Mosseri also previously testified on Feb. 11 and disagreed with the case's definition of addiction, claiming that "clinical addiction" is different from "problematic use" of Instagram or users who spend "too much time on Instagram."

If you or someone you know is struggling with thoughts of suicide, free, confidential help is available 24 hours a day, 7 days a week. Call or text the national lifeline at 988.